Let your assistant handle the boring transcodes.

If you've ever asked ChatGPT or Claude to convert a folder of EXRs to a ProRes preview, you know how it ends — guessed FFmpeg flags, mystery gamma shifts, files that "finished" but won't play.

Sequency now talks to your AI assistant directly. Same engine you already trust, same color, same codecs — your agent finally gets the file right the first time.

Real exports. Real engine. No hallucinated flags.

Why an editor should care

You're not writing code. You're a colorist with 47 BRAW clips, a compositor with three rounds of EXR notes, an editor staring down a director's cut at midnight. The CLI is for the assistant that's about to help you get there.

"Convert these to ProRes."

The assistant invents an FFmpeg command. Half the flags are wrong. The output plays back green. You spend an hour debugging an AI's hallucination instead of cutting your scene.

"Convert these to ProRes."

The assistant asks Sequency what it can do, looks at the actual files, shows you the plan, runs it through the same export engine the app uses, and confirms the file landed on disk before saying it's done.

A file you can actually use.

Right color space. Right codec. Right frame rate. No silent gamma shift. No 0-byte mystery file. The next step in your day is grading the shot — not fixing the export.

Same Sequency. Two ways in.

The CLI isn't a stripped-down toy. It's the same export stack the app uses, exposed so an AI assistant can use it as carefully as you would.

The Sequency app

Drag a folder. Pick a preset. Hit export. The native Mac interface you already use, with the inspector, the previews, and the batch queue.

- Drag-and-drop with smart sequence detection

- Visual color routing, ACES & OCIO

- Pause, resume, batch — in your hands

The Sequency CLI

A machine-readable contract. Your agent inspects the footage, plans the conversion, dry-runs it, exports through the same engine, and verifies the file — all in plain JSON.

- Live capability manifest — no guessed flags

- Dry-run JSON before any byte is written

- Structured success, structured errors, real verification

Your agent sees the export plan before it touches the footage.

From prompt to proven export

The same six steps a careful human would take — only the assistant is doing them, and Sequency is verifying every one.

Check the room before you cook

The agent asks Sequency whether the current session can actually write video and image files. If a sandbox is blocking AVFoundation or Core Image, it fails loudly here — not three minutes into a render.
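As a rough sketch of how an agent-side wrapper might gate work on this check, the snippet below runs `sequency agent doctor` and reports whether it passed. It assumes the command signals a blocked session with a non-zero exit code, which this page does not spell out; `preflight_ok` and its injectable `run` parameter are illustrative names, not part of Sequency.

```python
import subprocess
from typing import Callable

DOCTOR_ARGV = ["sequency", "agent", "doctor"]


def preflight_ok(
    run: Callable[..., subprocess.CompletedProcess] = subprocess.run,
) -> bool:
    """Return True when the doctor check passes.

    Assumption: a non-zero exit code means the session cannot export.
    """
    result = run(DOCTOR_ARGV, capture_output=True, text=True)
    return result.returncode == 0
```

Passing `run` as a parameter keeps the check easy to exercise without the CLI installed; a real agent would call `preflight_ok()` with the default and stop before any export if it returns `False`.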

sequency agent doctor

Read the menu, don't guess flags

Instead of memorizing command syntax, the agent fetches a live capability manifest: every supported codec, container, image format, EXR compression option, audio mode, and camera control — versioned to the build.
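This page does not show the manifest's schema, so the sketch below deliberately avoids assuming key names. It walks whatever JSON `sequency agent manifest` returns and collects every key and string value, so an agent can confirm that a name it intends to pass actually appears somewhere in the manifest. It assumes the manifest is printed as JSON; `manifest_strings` and the sample dictionary are hypothetical.

```python
import json
from typing import Any, Set


def manifest_strings(node: Any) -> Set[str]:
    """Collect every dict key and string value found anywhere in parsed JSON."""
    found: Set[str] = set()
    if isinstance(node, str):
        found.add(node)
    elif isinstance(node, dict):
        for key, value in node.items():
            found.add(key)
            found |= manifest_strings(value)
    elif isinstance(node, list):
        for item in node:
            found |= manifest_strings(item)
    return found


# Hypothetical manifest shape, for illustration only.
sample = json.loads('{"build": "example", "codecs": ["h264"]}')
known = manifest_strings(sample)
```

An agent could then refuse to use any codec name that is not in `known`, rather than guessing one.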

sequency agent manifest

Look at the footage first

Before choosing settings, the agent reads the actual file or sequence. Frame rate, resolution, color space, codec, alpha, audio, camera metadata — pulled from the source so decisions are grounded in reality.

sequency info --file /shots/A001C006.mxf --json

Show your work before you write

Sequency returns the prepared route as JSON: codec, frame rate, resolution, audio behavior, alpha behavior, color conversion, and camera-decode settings. You can read it. So can the agent. Nothing has been written yet.
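A minimal sketch of how an agent might build the dry-run command and sanity-check the returned plan before writing anything. The flags are the ones shown on this page, and the `status`, `input`, and `output` field names come from its sample dry-run payload; `convert_argv` and `plan_problems` are illustrative helpers, not part of Sequency.

```python
from typing import Any, Dict, List


def convert_argv(input_path: str, output_path: str, codec: str, dry_run: bool) -> List[str]:
    """Build the convert command using only the flags shown on this page."""
    argv = ["sequency", "convert", "--input", input_path,
            "--output", output_path, "--codec", codec]
    if dry_run:
        argv.append("--dry-run")
    argv.append("--json")
    return argv


def plan_problems(plan: Dict[str, Any], input_path: str, output_path: str) -> List[str]:
    """Compare a dry-run payload with what was requested; return any mismatches."""
    problems = []
    if plan.get("status") != "dryRun":
        problems.append(f"unexpected status: {plan.get('status')!r}")
    if plan.get("input") != input_path:
        problems.append("plan reads a different input than requested")
    if plan.get("output") != output_path:
        problems.append("plan writes a different output than requested")
    return problems
```

An empty list from `plan_problems` means the plan matches the request, and the same `convert_argv` call with `dry_run=False` yields the real export command.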

sequency convert \
  --input /shots/A001C006.mxf \
  --output /exports/A001C006.mp4 \
  --codec h264 --dry-run --json

Run through the real engine

The conversion runs through the same export stack the app uses: image-sequence routes, video routes, MKV, BRAW, ARRI MXF, custom MXF, audio defaults, alpha defaults, and OpenColorIO-backed color management.

sequency convert \
  --input /shots/A001C006.mxf \
  --output /exports/A001C006.mp4 \
  --codec h264 --json

Confirm the file is actually there

A careful assistant checks that the expected video file or image-sequence directory was created and is non-empty before reporting success. No more 'finished with errors' that quietly produces nothing.
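The check described here can be sketched in a few lines. This is one possible agent-side implementation, not Sequency's own verification: `output_landed` is a hypothetical helper that accepts either a video file or an image-sequence directory and treats a directory as valid once it holds at least one non-empty file.

```python
from pathlib import Path


def output_landed(path: str) -> bool:
    """True for a non-empty file, or a directory with at least one non-empty file."""
    target = Path(path)
    if target.is_file():
        return target.stat().st_size > 0
    if target.is_dir():
        return any(p.is_file() and p.stat().st_size > 0 for p in target.iterdir())
    return False  # missing path: nothing was written
```

A stricter agent might also compare the frame count or file size against the completion payload, but even this minimal test catches the 0-byte and missing-file cases.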

{ "status": "completed", "outputPath": "/exports/A001C006.mp4" }

Controls your agent can actually reach

For BRAW and ARRI sources, the dry-run route reports decode intent, look usage, exposure, and SDK color spaces, so the agent can adjust them without inventing flag names.
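As an illustration, an agent could compare the camera settings reported in a dry-run plan with what the user asked for. The `cameraSettings` key and its fields are taken from this page's sample dry-run payload; `camera_mismatches` is a hypothetical helper. The page does not show how settings are changed, so this sketch only detects differences.

```python
from typing import Any, Dict, Tuple


def camera_mismatches(plan: Dict[str, Any], wanted: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (planned, wanted)} for each camera setting that differs."""
    planned = plan.get("cameraSettings") or {}
    return {
        key: (planned.get(key), value)
        for key, value in wanted.items()
        if planned.get(key) != value
    }
```

An empty result means the plan already matches the request; anything else is worth showing to the user before the export runs.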

Results agents can act on

Human terminal output is great for people. Agents need stricter ground. Every JSON command returns a structured payload — for plans, completions, and failures alike. Errors include a code, an exit code, and a remediation message so the next step is obvious.
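To make that concrete, here is a small sketch of how an agent might branch on the payload. It relies only on the three `status` values and the field names that appear in this page's sample payloads; `next_step` and the action labels it returns are illustrative, not part of Sequency.

```python
import json
from typing import Any, Dict


def next_step(raw: str) -> Dict[str, Any]:
    """Map a Sequency JSON payload to a small decision the agent can act on."""
    payload = json.loads(raw)
    status = payload.get("status")
    if status == "dryRun":
        return {"action": "review_plan", "route": payload.get("route")}
    if status == "completed":
        return {"action": "verify_output", "path": payload.get("outputPath")}
    if status == "error":
        err = payload.get("error", {})
        return {
            "action": "stop",
            "code": err.get("code"),
            "exit_code": err.get("exitCode"),
            "hint": err.get("remediation"),
        }
    # Anything unrecognized is treated as a failure rather than guessed at.
    return {"action": "stop", "code": "unknownStatus"}
```

Treating unknown statuses as a stop keeps the agent from reporting success on a payload it does not understand.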

Plan, before pixels

{
  "status": "dryRun",
  "route": "video → video (H.264)",
  "input": "/shots/A001C006.mxf",
  "output": "/exports/A001C006.mp4",
  "frameRate": 23.976,
  "audioOption": "copyFromSource",
  "cameraSettings": {
    "decodeIntent": "nativeParity",
    "exposureIndex": 800,
    "whiteBalanceKelvin": 5600
  }
}

Done, and verified

{
  "status": "completed",
  "outputPath": "/exports/A001C006.mp4",
  "bytesWritten": 184562112,
  "framesEncoded": 240,
  "elapsedSeconds": 11.4
}

Failure with a next step

{
  "status": "error",
  "error": {
    "code": "inputNotFound",
    "message": "Input was not found.",
    "exitCode": 2,
    "remediation": "Check the path and run info or dry-run again."
  }
}

Install it where your agent already works

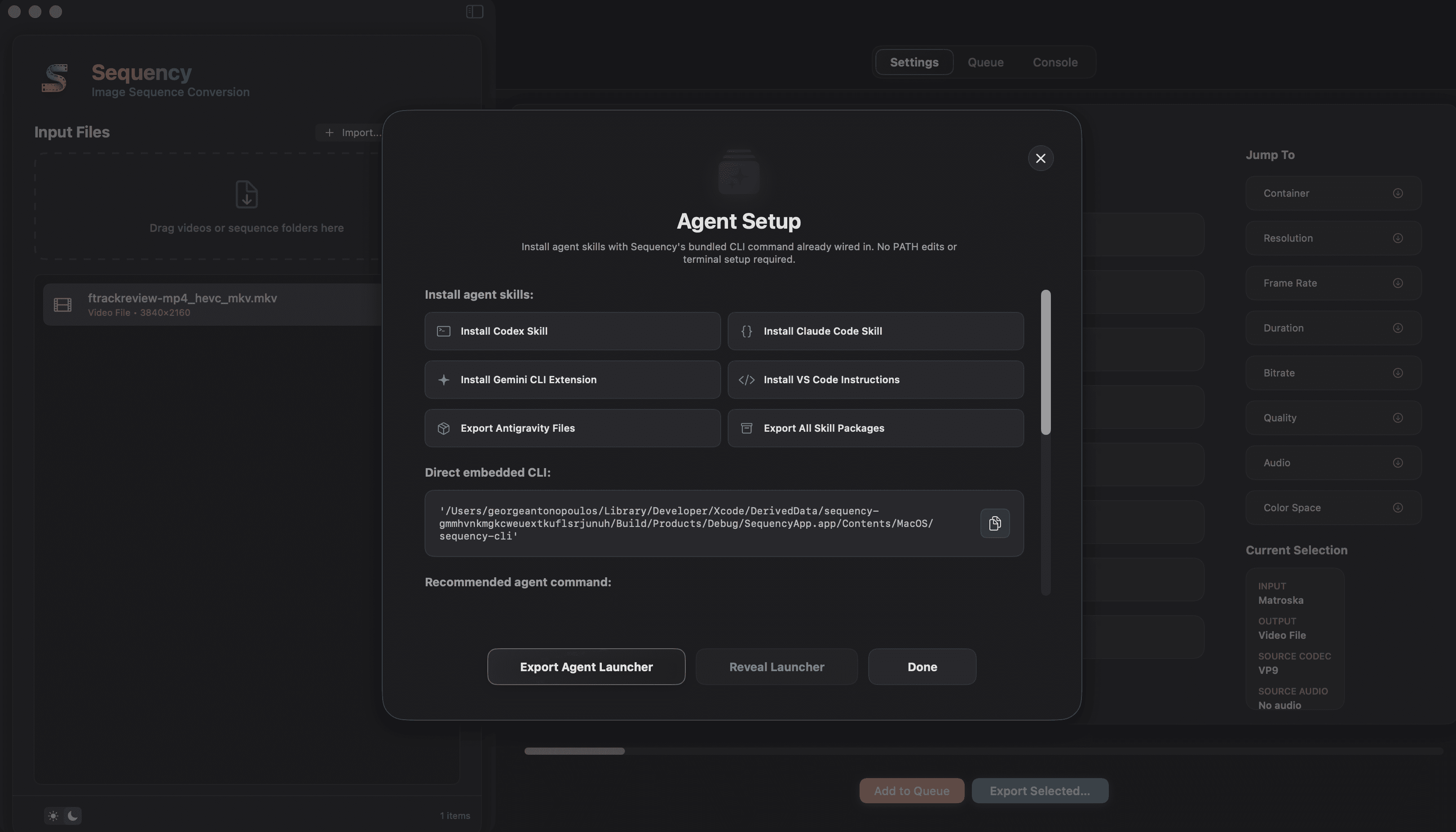

Sequency's Agent Setup window installs or exports ready-made packages with the active CLI command already wired in. No PATH guessing. No stale aliases. No manually copying app-bundle paths.

Codex

Installs the Sequency skill into Codex skill roots. Triggers on /skills, $sequency, or natural language.

Claude Code

Installs the Sequency skill plus a /sequency slash command for one-keystroke invocation.

Gemini CLI

Installs a Gemini extension with a /sequency command, ready in your terminal.

VS Code Copilot

Exports workspace .github instructions and a /sequency prompt file you can commit alongside your project.

Antigravity

Exports conservative instruction files for Antigravity workflows. Native packaging will follow.

Export all

Bundle every package for offline review, IT distribution, or pushing to your team's private registry.

Each package is marked with a stable Sequency ID, so reinstalling never duplicates skills. One Sequency skill — current command, canonical location.

Routes through the same export stack

Image-sequence to video, video to video, video to image-sequence, MKV paths, BRAW and ARRI MXF decode, custom MXF, alpha-aware image outputs, audio-aware video defaults, and OpenColorIO-backed color management — same as the app.

Sandboxed agent sessions may need host permission for AVFoundation/Core Image exports. The agent doctor command surfaces this before any real export is trusted.

Stop debugging your assistant's transcodes.

Download Sequency, open Agent Setup, install your skill, and ask in plain English. The boring part is over.